Binary Fractions

How they work

As a programmer, you should be familiar with the concept of binary integers, i.e. the representation of integer numbers as a series of bits:

| Decimal (base 10) | Binary (base 2) |
|---|---|
| $1 \cdot 10^1 + 3 \cdot 10^0 = 13_{10}$ | $1101_2 = 1 \cdot 2^3 + 1 \cdot 2^2 + 0 \cdot 2^1 + 1 \cdot 2^0$ |
| $1 \cdot 10 + 3 \cdot 1 = 13_{10}$ | $1101_2 = 1 \cdot 8 + 1 \cdot 4 + 0 \cdot 2 + 1 \cdot 1$ |
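The positional expansion in the table can be sketched in a few lines of Python. The helper name `binary_digits_to_int` is made up for this illustration; `int(text, 2)` and `bin()` are Python built-ins that perform the same conversion.

```python
def binary_digits_to_int(bits: str) -> int:
    """Sum bit * 2**position, where position 0 is the rightmost digit."""
    total = 0
    for position, bit in enumerate(reversed(bits)):
        total += int(bit) * 2 ** position
    return total

print(binary_digits_to_int("1101"))  # 13, computed as 8 + 4 + 0 + 1
print(int("1101", 2))                # 13, using the built-in parser
print(bin(13))                       # 0b1101, the reverse direction
```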

This is how computers store integer numbers internally. And for fractional numbers in positional notation, they do the same thing:

| Decimal (base 10) | Binary (base 2) |
|---|---|
| $6 \cdot 10^{-1} + 2 \cdot 10^{-2} + 5 \cdot 10^{-3} = 0.625_{10}$ | $0.101_2 = 1 \cdot 2^{-1} + 0 \cdot 2^{-2} + 1 \cdot 2^{-3}$ |
| $6 \cdot 1/10 + 2 \cdot 1/100 + 5 \cdot 1/1000 = 0.625_{10}$ | $0.101_2 = 1 \cdot 1/2 + 0 \cdot 1/4 + 1 \cdot 1/8$ |
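The same idea carries over to the digits after the binary point. The following is a minimal sketch, assuming the input is just the string of digits after the point; the function name `binary_fraction_to_fraction` is hypothetical, and `fractions.Fraction` is used so that the arithmetic stays exact.

```python
from fractions import Fraction

def binary_fraction_to_fraction(digits: str) -> Fraction:
    """Interpret digits after the binary point: the k-th digit weighs 1/2**k."""
    total = Fraction(0)
    for k, bit in enumerate(digits, start=1):
        total += int(bit) * Fraction(1, 2 ** k)
    return total

value = binary_fraction_to_fraction("101")  # 1/2 + 0/4 + 1/8
print(value)         # 5/8
print(float(value))  # 0.625
```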

Problems

While they work the same in principle, binary fractions differ from decimal fractions in which numbers they can represent exactly with a given number of digits, and thus also in which numbers incur rounding errors.

Specifically, binary can represent a number as a finite fraction only if its denominator, in lowest terms, is a power of 2. Unfortunately, this excludes most of the numbers that can be represented as a finite fraction in base 10, such as 0.1.
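The denominator rule can be checked mechanically. This is a sketch rather than a definitive implementation; both function names are invented for the example. It relies on `fractions.Fraction` always storing values in lowest terms, and on the fact that base 10 admits exactly the denominators whose only prime factors are 2 and 5.

```python
from fractions import Fraction

def is_finite_in_binary(x: Fraction) -> bool:
    """True if x has a terminating base-2 expansion."""
    d = x.denominator          # Fraction keeps values in lowest terms
    return d & (d - 1) == 0    # exactly one bit set, so d is a power of 2

def is_finite_in_decimal(x: Fraction) -> bool:
    """True if x has a terminating base-10 expansion."""
    d = x.denominator
    for prime in (2, 5):       # 10 = 2 * 5, so only these factors may occur
        while d % prime == 0:
            d //= prime
    return d == 1

for x in (Fraction(1, 2), Fraction(5, 8), Fraction(1, 10), Fraction(1, 3)):
    print(x, is_finite_in_binary(x), is_finite_in_decimal(x))
```

Of the four sample values, 1/2 and 5/8 terminate in both bases, 1/10 terminates only in decimal, and 1/3 terminates in neither.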

| Fraction | Base | Positional Notation | Rounded to 4 digits | Rounded value as fraction | Rounding error |

|---|---|---|---|---|---|

| 1/10 | 10 | 0.1 | 0.1 | 1/10 | 0 |

| 1/3 | 10 | 0.3 | 0.3333 | 3333/10000 | 1/30000 |

| 1/2 | 2 | 0.1 | 0.1 | 1/2 | 0 |

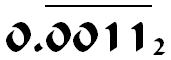

| 1/10 | 2 | 0.00011 | 0.0001 | 1/16 | 3/80 |
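The table values can be reproduced with exact rational arithmetic. The sketch below assumes that the digits are simply cut off after the fourth place (truncation rather than rounding to nearest), since that is what matches the 1/16 entry, and that the input is non-negative. The function name `truncate` is hypothetical.

```python
from fractions import Fraction

def truncate(x: Fraction, base: int, digits: int) -> Fraction:
    """Keep only the first `digits` fractional digits of x in the given base.

    Assumes x >= 0; floor division would round negative values away from zero.
    """
    scale = base ** digits
    return Fraction(x.numerator * scale // x.denominator, scale)

rows = [(Fraction(1, 10), 10), (Fraction(1, 3), 10),
        (Fraction(1, 2), 2), (Fraction(1, 10), 2)]

for x, base in rows:
    kept = truncate(x, base, 4)
    print(f"{x} in base {base}: kept {kept}, error {x - kept}")
```

The last row yields a kept value of 1/16 and an error of 3/80, in agreement with the table.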

This is why you already get a rounding error when you simply write down a number like 0.1 and run it through your interpreter or compiler. The error is much smaller than 3/80, and may be invisible, because computers cut off after 23 or 52 binary digits (single and double precision, respectively) rather than 4. But the error is there, and it will cause problems eventually if you just ignore it.
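To make the hidden error visible, the exact value that is stored for the literal `0.1` can be inspected. The sketch assumes that Python's `float` is an IEEE 754 double, which holds for CPython on common platforms; `Fraction(float)` and `Decimal(float)` both convert the stored binary value exactly, without further rounding.

```python
from decimal import Decimal
from fractions import Fraction

stored = Fraction(0.1)                 # exact value of the double nearest to 0.1
print(stored)                          # 3602879701896397/36028797018963968
print(stored.denominator == 2 ** 55)   # True: the denominator is a power of 2
print(stored - Fraction(1, 10))        # 1/180143985094819840, the error
print(Decimal(0.1))                    # 0.1000000000000000055511151231257827021181583404541015625
```

The error is tiny compared to 3/80, but it is not zero.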

Why use Binary?

At the lowest level, computers are based on billions of electrical elements that have only two states (usually low and high voltage). By interpreting these as 0 and 1, it’s very easy to build circuits for storing binary numbers and doing calculations with them.

While it’s possible to simulate the behaviour of decimal numbers with binary circuits as well, it’s less efficient. If computers used decimal numbers internally, they’d have less memory and be slower at the same level of technology.

Since the difference in behaviour between binary and decimal numbers is not important for most applications, the logical choice is to build computers based on binary numbers and live with the fact that some extra care and effort are necessary for applications that require decimal-like behaviour.
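As one illustration of that extra effort, Python's standard `decimal` module implements decimal arithmetic in software on top of binary hardware. The comparison below is only a sketch of the difference in behaviour, and it assumes the values are constructed from strings so that no binary rounding happens first.

```python
from decimal import Decimal

# Binary floats: each literal is rounded to a nearby binary fraction.
print(0.1 + 0.2 == 0.3)                                   # False

# Decimal values built from strings are stored exactly in base 10.
print(Decimal("0.1") + Decimal("0.2") == Decimal("0.3"))  # True
```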

© Published at floating-point-gui.de under the Creative Commons Attribution License (BY)